I strapped a ChatGPT-powered monocle to my face for a week

How the AI chatbot can help (or not help) you navigate your daily life

AI might not be ready to take over the world just yet, but it’s coming for your face. Brilliant Labs have been tinkering with a smart AR monocle that hooks up to the ChatGPT AI chatbot.

The new wearable brings the power of AI straight to your face, letting you talk to the chatbot and view its responses.

But how does it fare out in the real world? Can access to an AI-powered chatbot at all times help you navigate your daily life? Or will it actually make things harder? To find out, I strapped Brilliant Labs’ ChatGPT monocle to my face and wore it for a week using the basic chatbot functionality. I didn’t hack around with any third-party apps this time – it’s just pure chatbot goodness.

So, what is this ChatGPT-powered monocle anyway?

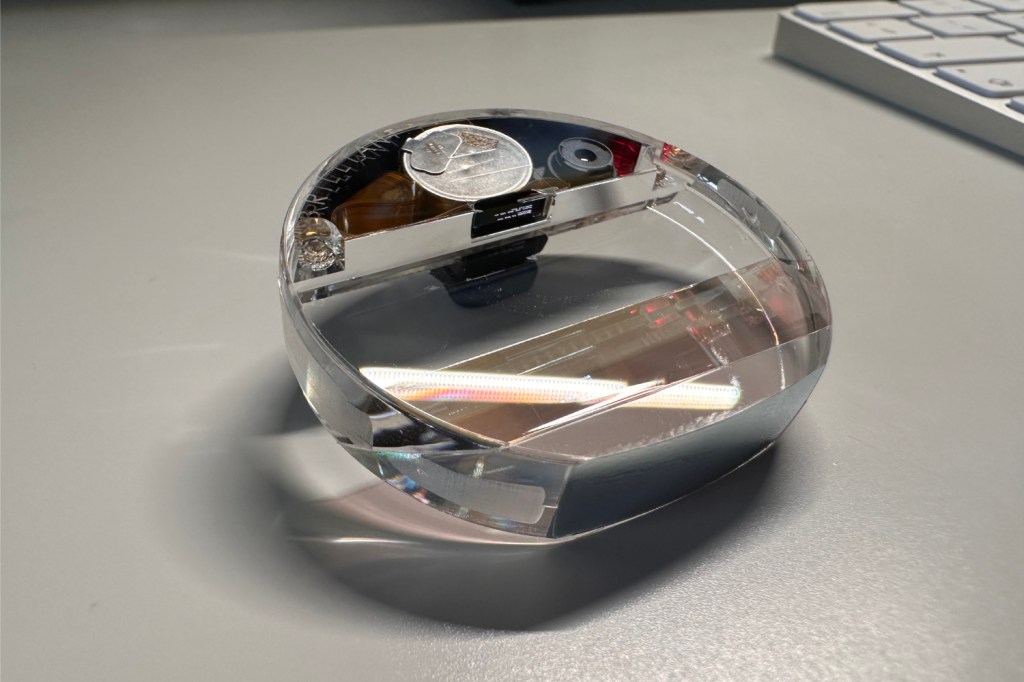

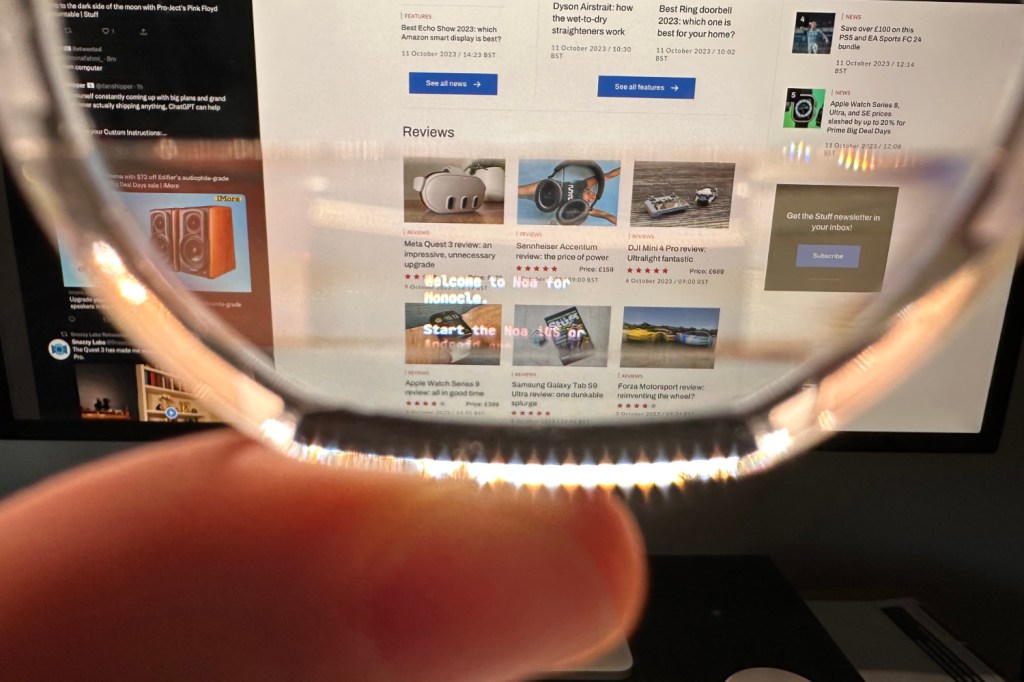

Brilliant Labs’ smart monocle lets you use AI chatbots as a tool in your daily life. Rather than having to type questions on a keyboard or your smartphone, you can talk through the monocle. You’ll then see written responses on the embedded AR screen, overlaid on your surroundings. The monocle plugs straight into OpenAI’s ChatGPT for all its brainy help. There’s a 720p camera built in, alongside the microphone, touch sensors, and display.

You could use this to ask for prompts during an interview, or to help with translations on a trip abroad. But there are a few hoops you’ll need to jump through. To use the monocle, you’ll need to download Brilliant Labs’ Noa app to your mobile. You’ll also need access to the ChatGPT API, which requires some technical know-how.

What happened while wearing the monocle for a week?

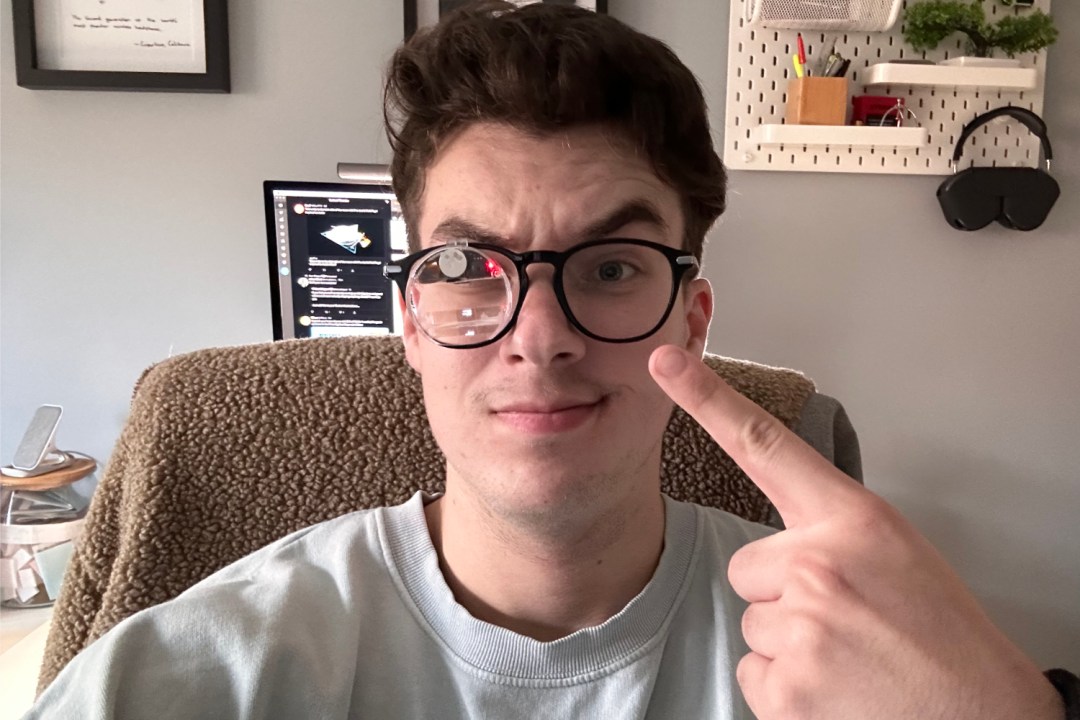

For the past week, I’ve made sure to strap the ChatGPT monocle to my face for every outing, day at the desk, and trip to Tesco. Since it hooks on to your glasses, I’ve essentially worn the wearable whenever I want to see – which is most of the time.

Right off the bat, it’s not the ideal form factor for a wearable. Brilliant Labs have taken a one-size-fits-all approach here, so the actual monocle is smaller than my glasses’ lens. This means I have to peer past some of the inner workings to actually see out of the lens. It’s something that could be tweaked with further design revisions. Attention also needs to be paid to the weight. While the monocle won’t pull your glasses down too much, it does add some noticeable heft on your ear. And my glasses may have slid off once or twice as I looked down.

The other big element is the companion app. You need to use the Noa app on your phone for the ChatGPT requests to go through to the cloud. While you do need to leave the app open at all times, it still seems to work if you dim your smartphone’s display and keep the phone in your pocket. You’ll obviously need some sort of Wi-Fi or data connection as well.

When it comes to using the monocle, things are a bit mixed. You can tap the top of the monocle to get it to start listening, and then talk straight into it. It takes a few seconds to process what you’ve said, and then a while longer to spit out ChatGPT’s response. I think the longest I had to wait for a reply was around 30 seconds, though this was for a more complex request.

What did I use the monocle for, you ask? This week coincided with a trip to Berlin, so I took the monocle there on the first day. Airport security were a little amused by the device, but didn’t seem all that concerned. On arriving in Berlin, I tried out some translation using the monocle. I asked ChatGPT to translate a phrase from a sign in the airport, mustering up my best German pronunciation. It worked, and got the translation right (I think – I ended up in the right place, at least). But it took far too long. I was stood around for a couple of minutes through this ordeal, likely looking somewhat lost. It’s certainly not fast enough for real-time translation in a conversation.

Other tasks I used it for included asking for simple answers to questions, mainly date checking, and other things you’d usually use a quick Google search for. As long as what I was looking for was pre-2021, ChatGPT was able to help out. Away from my desk, I can’t honestly say I found myself using the monocle much in real life. One time, I did ask it what a certain ingredient in Nutella was while in Tesco, but that was about it. I also staged a mock job interview to try and get some AI help, but ChatGPT didn’t seem very eager to give me the “ideal” responses to each question.

Perhaps I’m the wrong type of person to take an AI assistant around with me. I hardly ever use Siri for requests, beyond controlling the lights at home. So maybe the monocle isn’t quite for me. However, I think the tricky part lies a bit deeper than this. For a product that you need to shell out a few hundred dollars for, it’s a bit clunky. Brilliant Labs embrace this as part of their charm, but a consumer-ready version would need lots of fine-tuning to get people to buy it.

Better yet, this display should be built into smart glasses, or items you’re already wearing. Where’s the ChatGPT app that supports voice commands on smartwatches? Why don’t Meta’s smart glasses pack an AI assistant? Pack these brains into something people are already wearing, and you might have a more compelling case. I’d be happy to carry round AI on my face if it came as part of my glasses. But until then, I think it’s time to retire the monocle.