What is ChatGPT? The AI chatbot explained

All you need to know about the popular AI chatbot

ChatGPT is a conversational AI language model developed by OpenAI. It uses deep learning algorithms to generate human-like responses to text inputs, allowing it to converse on a wide range of topics in a natural and engaging manner.

And ChatGPT wrote that paragraph itself. But how? What makes ChatGPT tick? And should you be scared? Here, we answer those questions, and a few more.

What is ChatGPT?

ChatGPT, which you can access through its website and app, is one of a new generation of chat programs that exist as user-friendly front-ends to the deep-learning models we’ve come to call ‘AIs’.

It launched on 30 November 2022 and, in simple terms, can parse a question or statement given to it and respond in natural language.

Ask ChatGPT a question based in fact, such as ‘how old is George Clooney?’, and it will reply with a factual answer. Sometimes, it’ll reply with an emotional or philosophical answer. It can help draft an email to a client or, if fed enough information, draft a university essay.

What ChatGPT isn’t, is Skynet.

ChatGPT comes from OpenAI, a research lab in San Francisco that has both non-profit and for-profit branches. It was founded in 2015 with funding from a group of backers that included Elon Musk, Infosys and Amazon Web Services. In 2019, Microsoft invested a whopping $1 billion in OpenAI. The lab has pledged to make its patents and research available to the public.

OpenAI has released several products, all based around the concepts of machine learning and reinforcement learning. They include RoboSumo, in which virtual robots first learn how to walk, then push each other out of the ring. There’s the GPT series of generative pre-trained language models, on which ChatGPT is based. OpenAI are also the developers behind the incredibly memeable DALL-E image generator.

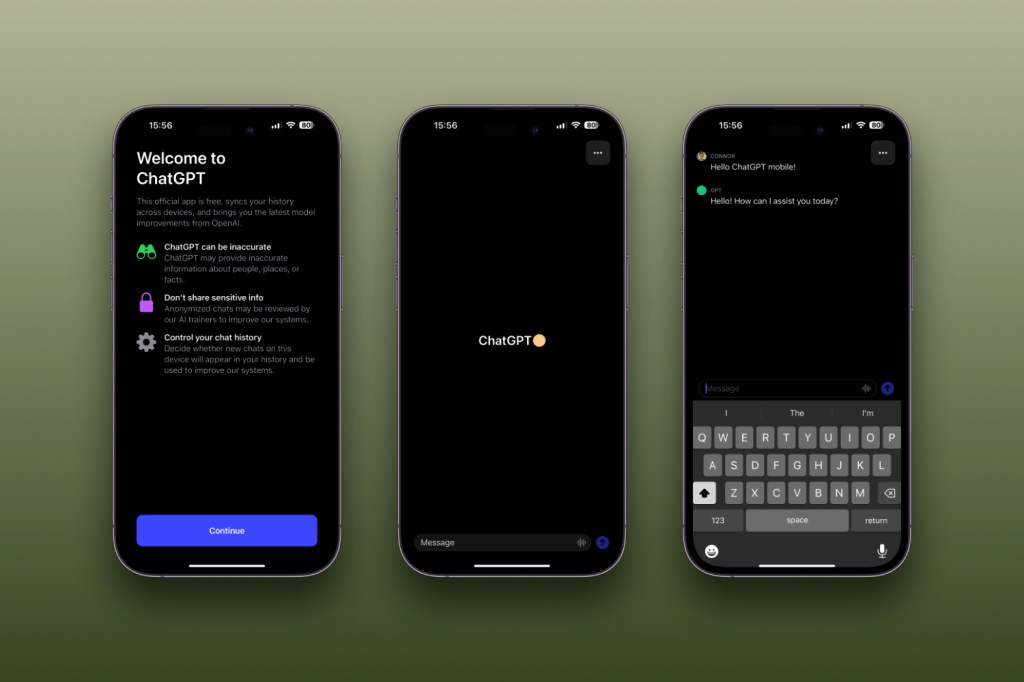

As we mentioned, you can access ChatGPT through the web, by visiting its homepage. Here, you can launch conversations with the AI-powered bot and manage your settings. Since ChatGPT has been out for a while, you’ll also find browser extensions that add extra functionality. Further, ChatGPT Plus premium subscribers can add plug-ins to allow the chatbot to work with third-party services and applications.

More recently, OpenAI released a mobile app for ChatGPT available on the App Store for iOS or on Google Play for Android devices. You’ll find the same functionality as the web version, just on your mobile device for added convenience.

How does it work?

Underneath ChatGPT is OpenAI’s GPT-3, or Generative Pre-trained Transformer 3. This is a pre-trained neural network with 175 billion parameters, taking up roughly 800GB. That is far too big to run on a laptop or smartphone, so those devices only act as a window onto it. The model itself runs in the cloud, in a data centre somewhere with an internet front-end. It’s among the most capable language models around, but it’s been out for a few years now. Rumours around the release of GPT-4 also abound.

Machine learning systems need to be trained before they can be put to use on a task, and this training determines how they behave. GPT-3 is already trained, so users don’t have to go through that process. The pre-training for GPT-3 involved it absorbing hundreds of billions of words from the internet and their associations with other words, plus code in languages such as Python and CSS. It’s just as capable of reading and writing computer programs as it is of producing realistic sentences in English, French, and almost 100 other languages.

This boils down to a system where you give the computer a prompt, and it can continue writing from that. It can, in theory, produce an essay, news article, translation or the answer to a question. Microsoft-owned GitHub uses a descendant of GPT-3 in its Copilot tool to write computer code based on natural language prompts.
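To make the idea of ‘continuing from a prompt’ concrete, here is a deliberately tiny sketch in Python. It is not how GPT-3 is built: it simply counts which word tends to follow which in a three-sentence training text, then extends a prompt one word at a time by picking the most common follower. The function names, the training text and the ‘pick the most frequent word’ rule are all our own assumptions for illustration. The real thing uses a vast neural network rather than a lookup table.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def continue_prompt(follows, prompt, max_new_words=5):
    """Extend the prompt by repeatedly appending the most frequent next word."""
    words = prompt.lower().split()
    for _ in range(max_new_words):
        # Look up what followed the last word during training.
        candidates = follows.get(words[-1]) if words else None
        if not candidates:
            break  # never saw this word in training, so stop writing
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


# Toy training text (an assumption for this sketch, not real training data).
corpus = (
    "the cat sat on the mat . "
    "the cat chased the mouse . "
    "the dog sat on the rug ."
)
model = train_bigrams(corpus)
print(continue_prompt(model, "the dog", max_new_words=3))  # the dog sat on the
```

Give it a word it has never seen and it stops dead, which is a small-scale version of why a model’s output depends so heavily on what it was trained on.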

ChatGPT is fine-tuned from a model in OpenAI’s GPT-3.5 series, a refinement of GPT-3, and tries to mimic human conversations. It works a bit like MSN used to, or a WhatsApp chat with someone who replies right away. It was released to the general public at the end of 2022, and quickly went viral. With it came fears that its ability to create long passages of natural text could spell trouble for writers, journalists, and teachers marking essays. It perhaps also prompted Google to rush out its recently announced ChatGPT rival, Bard.

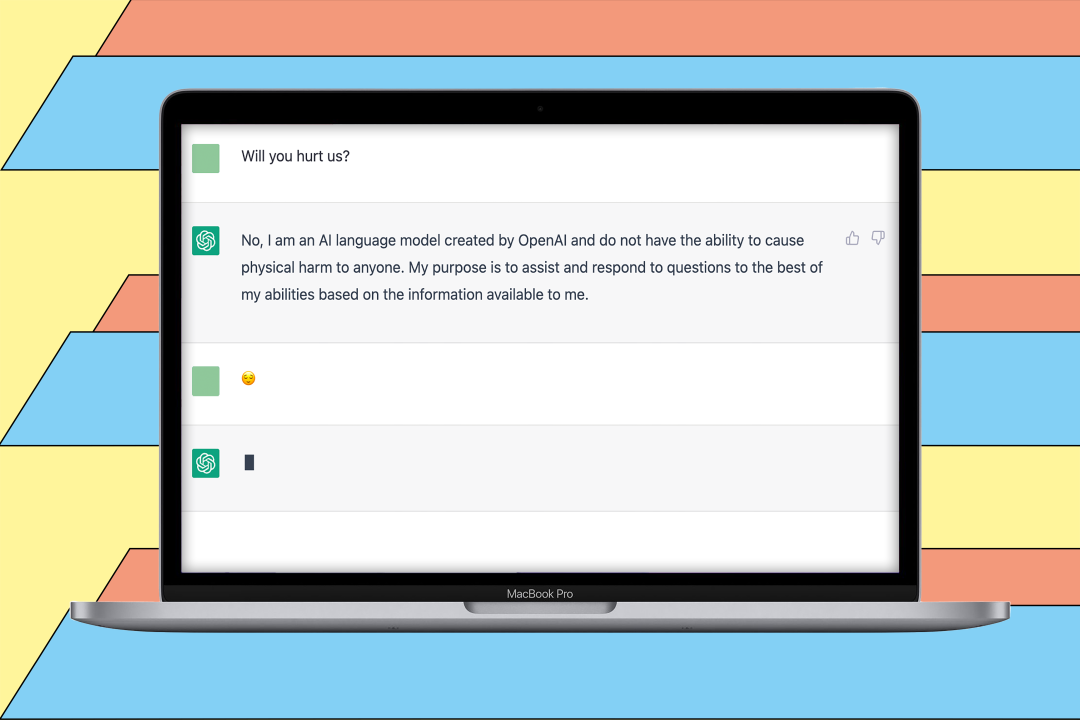

Should I be scared of it?

We asked ChatGPT this exact question. It said: “As an AI language model, I do not have personal opinions or motivations, and I am not capable of taking actions that could cause harm. I am designed to be a helpful tool for people, and I can assist with a wide range of tasks, including answering questions, generating creative content, and having conversations. People should use me and other AI technologies with caution and respect, as they have the potential to be powerful and impactful tools, but it is also important to consider the ethical and societal implications of their use.

“I don’t have the capacity for personal understanding, emotions, beliefs, or consciousness. I have been trained on a large corpus of text, which enables me to generate human-like responses to text inputs, but I do not have an inherent understanding of the meaning of the words or concepts I use in my responses. I simply use patterns in the data I was trained on to generate text that is likely to be a relevant and accurate response to a given input.”

That sounds very convincing. But a neural network is only ever as good as its training and what it’s exposed to, and skewed input produces skewed output, a problem known as ‘algorithmic bias’. Microsoft discovered this in 2016 when its Tay Twitterbot lurched to the far right after Twitter users bombarded it with increasingly extreme opinions and statements. Things have moved on a bit since then. Safeguards are in place to prevent chatbots extolling extremist views. ChatGPT has still proven susceptible to extreme opinions, though. And it can be inaccurate. In fact, OpenAI recommends checking its responses for factual correctness. This is especially the case for events after 2021, which weren’t included in its training data.

There’s also the question of what happens to your chats when you decide to log off. According to OpenAI’s FAQ, they’re reviewed by AI trainers and fed back into the system. You can delete all the data about you that OpenAI holds. Plus, there’s a comprehensive privacy policy, but it’s important not to reveal personal or sensitive information in your chats.

We’ll leave the last word to the singer/songwriter Nick Cave, who didn’t appreciate a song written in his style by ChatGPT, calling it “…a grotesque mockery of what it is to be human, and, well, I don’t much like it”.