What is Google Duplex?

Reckon it’s not good to talk? Then have Google’s AI make your calls instead of you

Computers are dumb – and they sound dumb. It’s a far cry from science fiction, where people chat to robot pals like they’re regular folks who happen to be encased in metal. Google Duplex, announced at Google I/O, is a step towards that better future. Ish.

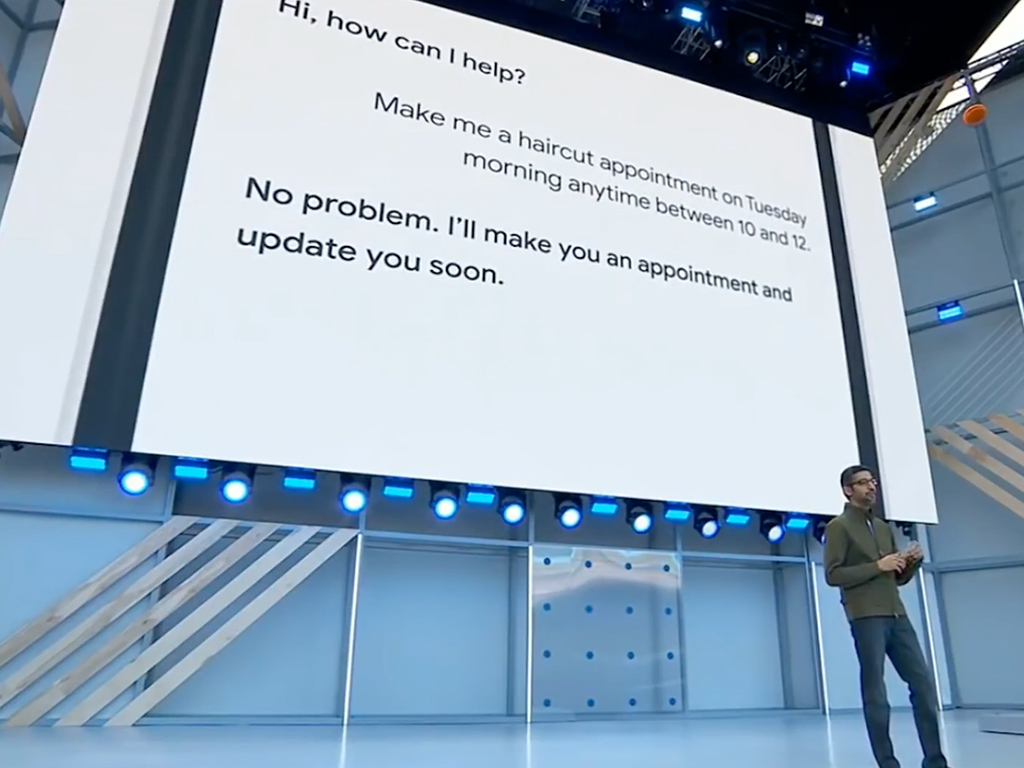

Rather than a chum for chats, though, Google Duplex is about having Google Assistant make bookings on your behalf. It brings together the company’s investments in natural language, deep learning and text-to-speech, in a mix of convenience and dubious morals that only modern technology can offer.

What is Google Duplex?

For Google, it’s a way to spare people from making phone calls to companies that lack online booking and interaction facilities. Initially, it’s primarily intended to set up appointments and place orders.

Google explains the system’s core is a recurrent neural network designed to cope with the challenges of such chats. It’s been trained on countless anonymised phone calls, and understands the nuances of conversation.

The breakthrough is in how it interacts. Latency is low, and Google Duplex understands context, adjusting conversations accordingly. This means people can talk to it naturally, like they would to a human being.

There is the odd tell: during demos, Google Duplex uttered an identical “mm-hmm” in several conversational lulls. But speech disfluencies are used intelligently, with the odd “um” or “er” added while the system’s processing, like a person gathering their thoughts.

How does Google Duplex work?

For the person triggering an action, the process is almost identical to any other Google Assistant interaction. But because Google hasn’t built a TARDIS into Google Duplex, it must make the call to deal with a request, which takes longer than providing a weather forecast from readily accessible online data.

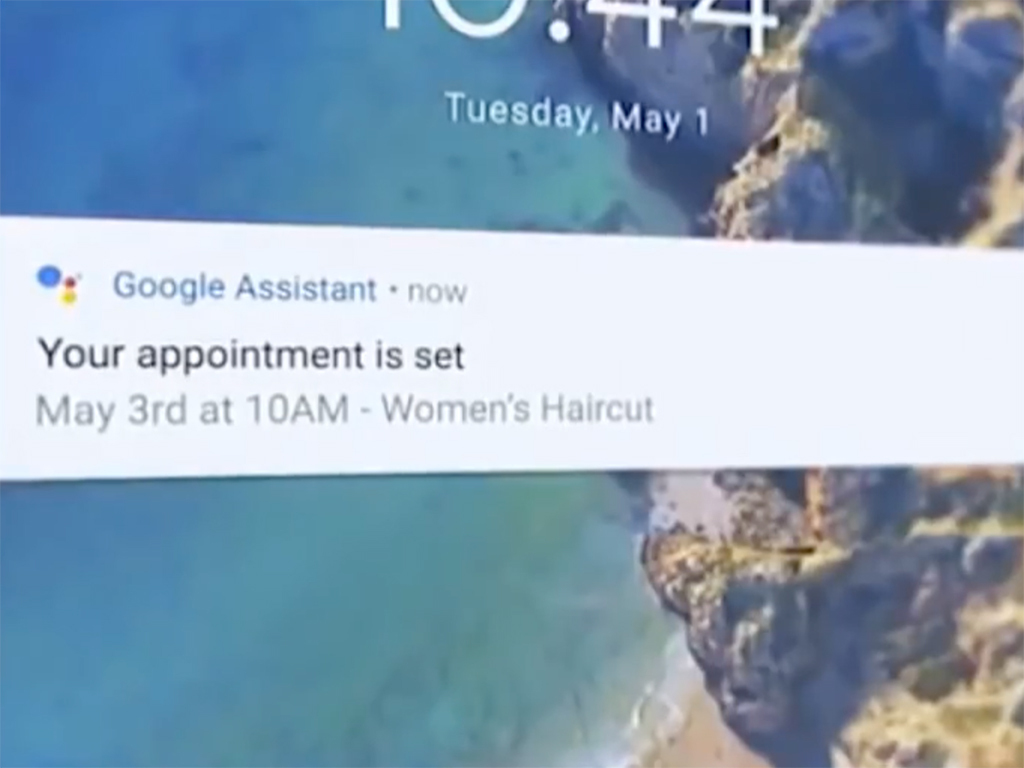

However, the process happens in the background. Ask Google Duplex to book you a haircut and it will do so while you get on with other things. Once the call’s complete, you’ll get a notification about how things were resolved.
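For the curious, that "ask, carry on, get notified" flow can be sketched in a few lines of Python. This is only a toy illustration of a background task, not how Google has built anything: the names `place_call` and `BookingResult` are made up for the example, and a short sleep stands in for the phone call.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class BookingResult:
    """Hypothetical outcome of a call; not a real Google type."""
    request: str
    confirmed: bool
    summary: str


async def place_call(request: str) -> BookingResult:
    # Stand-in for the phone call itself. A real call would take
    # minutes; here a short sleep fakes the wait.
    await asyncio.sleep(0.1)
    return BookingResult(request, True, f"Booked: {request}")


async def main() -> list:
    log = []
    # Start the call in the background rather than waiting for it.
    call = asyncio.create_task(place_call("haircut, Tuesday 10am"))
    # The user carries on while the call is in progress.
    log.append("user: getting on with other things")
    # When the call finishes, its result becomes the notification.
    result = await call
    log.append(f"notification: {result.summary}")
    return log


if __name__ == "__main__":
    for line in asyncio.run(main()):
        print(line)
```

The point is simply that the request returns control straight away, and the outcome turns up later as a message.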

For the person on the receiving end, the Google I/O demos suggest they’ll have no idea they’re talking to a machine, due to Google Duplex’s natural language smarts.

What are the benefits?

For Google, it’s a great way to get data. Google Duplex can make enquiries to companies about things like irregular store opening hours during holidays. The resulting information can then be made available online. The company claimed this could benefit businesses by reducing related call volumes from the general public.

For individuals armed with Google Duplex, it’s more about saving time, in the sense you don’t have to make a mundane phone call, and the person on the other end should be none the wiser.

Isn’t that a bit ethically dubious?

There was a whiff of that during the demos – a sense Google was experimenting on service workers, tricking them into thinking they were dealing with a person. It also suggests interacting with people is somehow below certain individuals, which doesn’t seem like a good place to be.

The lack of disclosure is a problem, setting Google Duplex apart from the Turing Test, where those involved always know they are trying to distinguish between a human and a machine. Google Duplex is deliberately designed to hide that fact.

There are other concerns. When the tech fails, who’ll get the blame? You weren’t on the call, remember. It’s easy to imagine people fuming at a company, revenge-spamming appointments when things don’t go to plan – or, worse, companies armed with Duplex-capable machines using them for human-sounding robo-calls.

Then there’s the snag that at some point there will be bots on both ends of all calls, who’ll figure out they’re doing all this grunt work for humans who think it’s beneath them. This is how Skynet starts.

Er, right. So when’s it coming?

Google Duplex will be rolled out as an experiment this summer, with Google testing the technology within Google Assistant. However, the company’s acknowledged the mixed response to the demo, and stresses things may change from what’s been shown so far.

For example, Google is reportedly planning to add built-in disclosure to inform people they’re dealing with a machine, and to dial down the ums, ahhs, and other noises.

Mm-hmm.