No more freedom to tinker: why Apple locking down OS X system tweaks is a bad thing

Craig Grannell laments Apple’s increasingly locked-down platforms, which stop people from figuring out how stuff works

In a sense, home computing can be split into two eras: ‘Tinkering Allowed’ and ‘Tinkering Totally Banned And What Are You Doing Poking Around This Thing Anyway, Stupid Human?’

Right now, we’re immersed in a kind of grey area between the two, but heading towards the latter, shortly before our piles of Windows, Android and Apple kit gain sentience, subsequently rising up to destroy us all.

Back in the 1980s and 1990s, it was a gentler, less dystopian, and more flexible time. Tinkering and wanting to tinker was second nature to anyone with a home computer. And this was quite interesting when you consider the path most people took during ownership of these devices.

Home computers would often be bought by a parent suckered in by advertising. You can use a ZX Spectrum to calculate home expenses! A Commodore 64 is perfect for running a home business! The BBC Micro will be a fun way for your kids to do their homework! Right. All anyone really wanted to do was play games.

Sooner or later, though, some people would get curious about whether the command prompt that appeared on starting up a computer could do more than load in a blocky virtual world. Eventually, they realised they could make their own.

Perhaps you’d only get as far as a smidgeon of BASIC, or zip a mangled sprite around the screen, but it was something you’d created yourself, on a computer.

Eventually, the gaming space was locked down as consoles became dominant. You couldn’t muck about with a SNES any more than you could get your washing machine cheerfully singing a soapy rendition of the latest Prodigy single.

PCs and Macs, though, remained a place where you could still tinker. Add-ons could be welded to operating systems, icons could be radically altered, themes could be applied, and underlying system components could be tweaked. A generation was taught to continue experimenting, creating and customising.

Then the iPhone and iPad arrived, effectively the games consoles of computers. These were closed boxes, glass and aluminium appliances capable of amazing things, but hostile to the idea individual users might want to think different. Now the Mac is set to become the iPad of computers.

In the next OS X update, ‘El Capitan’, Apple’s ushering in a feature-cum-policy called System Integrity Protection. Primarily about enhancing security, the knock-on effect will be further erosion of tinkering and tweaking.

Popular utilities that modify system behaviour will cease to exist; Finder and Dock will be protected; even icons will be locked down, lest you have the audacity to want to replace one with something more creative. This of course aligns with Apple’s appliance mentality for hardware, with most Macs now not even allowing you to add RAM, let alone replace other components.


Given technology’s progression, perhaps this was inevitable. As computing continues its shift from niche concern to a kind of ubiquity, things must ‘just work’ and be more secure — denying access and locking things down potentially helps with both.

But it’s hard to believe people en masse have become less curious, and it’s sad to note that many got their start in creative and computing fields by messing about with stuff and learning how it worked. Now, on the Mac, in many cases they won’t be able to.

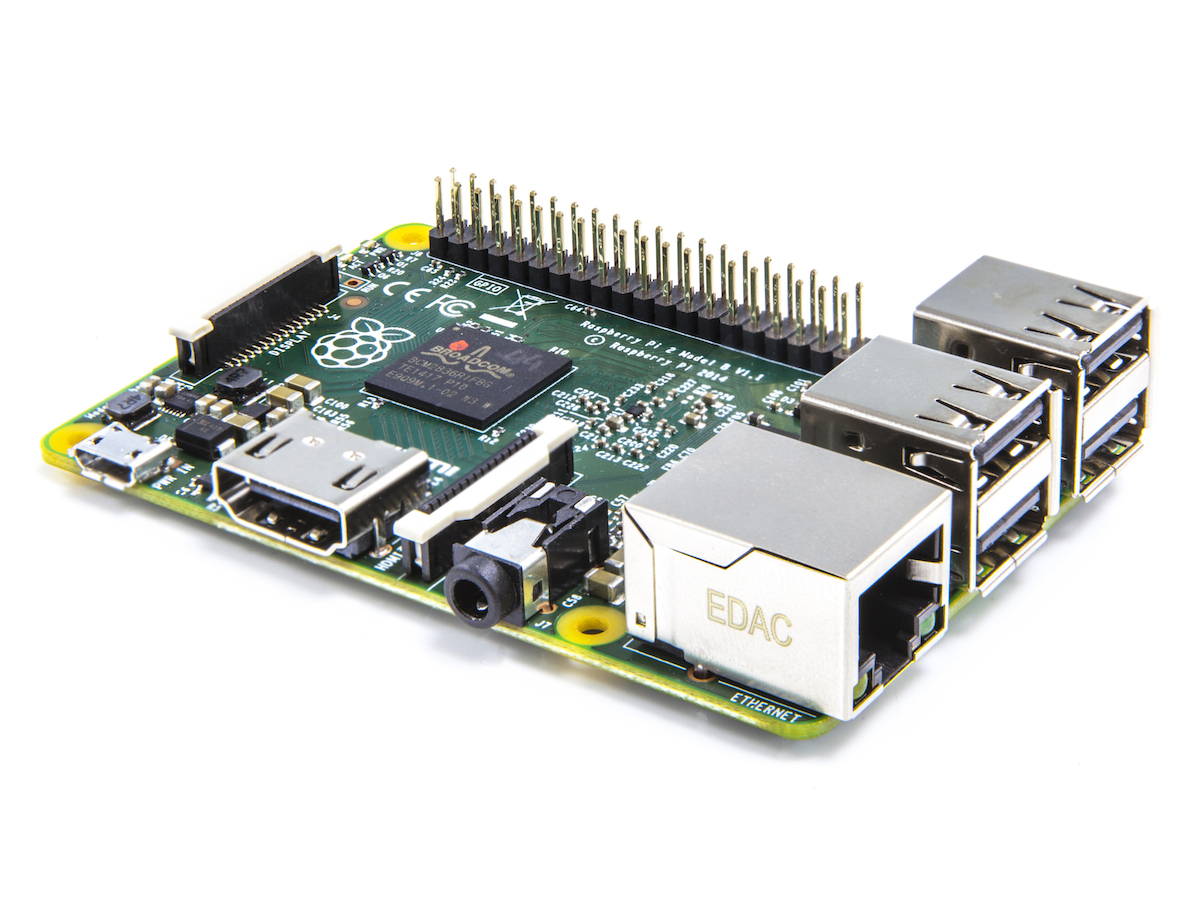

There are workarounds with OS X, but they’re unfriendly to the point only the most dedicated and desperate will implement them; and while there’s hope in powerful hobbyist platforms like the Raspberry Pi, there’s concern that where Apple leads, others in the industry — notably, Windows and Android — ultimately follow.

Perhaps something will eventually give. If not, the future of technology looks bleak, with the computers we use daily no more accessible than the white goods in your kitchen.

And if they do all rise up, Skynet-style, we’re done for if we can’t pacify psychotic Macs, crazed PCs and deranged mobile devices by cunningly swapping all their anger-infused innards with endless loops of ocean sounds and zen gardens.
